This post continues our series looking at innovative assistive technologies. The last post discussed some novel techniques for personalising interfaces to their users and devices. This post discusses an adaptation that is much closer to making it to a device near you…

As someone who is sensitive to light levels, I have often found features of software or devices that reduce the brightness of the screen to be helpful. A common means of doing this is via an “invert colours” feature, which flips the bits that make up the pixels on the screen, and you end up with a screen where all the colours are the opposite—white turns to black, light colours turn to dark colours.

Altered meaning

However, there is a problem with this approach: it’s not just the brightness that is inverted; it’s the hues too. This makes all photos of people look like X-rays (or like they’re being exterminated by Daleks), which may look cool at first glance, but really can distort our perception and ability to make sense of the images. It also changes the meanings of things: “everything’s OK” affirmative tick/check marks become pink, and red “danger/error” indicators become harmless light cyan/blue.

Invert brightness

There are a few solutions to this problem. My first exposure to “invert brightness” was from a university research project that had a proof-of-concept filter that ran on Windows, though alas the website was lost to time. Fortunately, some mainstream assistive technologies provide this feature too, and whilst it sounds like “invert colours” it’s actually a world apart, because things look just-about right, having the same hue, but a different level of light. The tone of a graphic design, and the meanings of symbols, are not lost.

Android provides this feature (it actually calls it “colour inversion” though it is in fact doing the right thing and inverting the brightness!) and it would be great if other platforms could adopt it too.

The only drawback of inverting brightness over the entire screen is that it doesn’t provide a fully natural solution for photographs—the sky being dark and the ground being light looks unusual, even if the colours are correct. The best way around this is to simply leave photos as they are, but invert the brightness of the other areas of the screen.

Current approaches for photographs

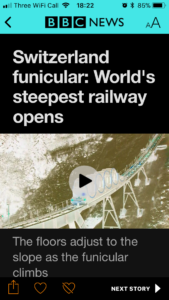

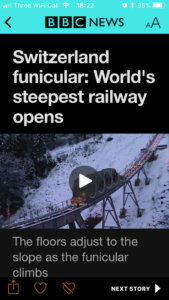

Alas I’m not aware of any other platform that offers that combination of features. Chrome has a High Contrast extension that leaves images as they are, whilst inverting the colours of other content on the page. Apple’s iOS 11 has a similar feature called “smart invert” that also leaves media alone and inverts the other colours on-screen. This does help a lot with images, but it’s still using colour, rather than brightness, inversion, for the rest of the screen, so the problems discussed above with altered design and meaning still apply, as the following images demonstrate.

(You may notice that in the “smart invert” scheme, the status bar text at the very top of the phone screen is kept white. This would have contrasted well with the original, red, branding at the top of the story, but is difficult to read against the colour-inverted, cyan, branding. Whilst it’s laudable that the smart invert approach has been created and continues to be developed, this reinforces the importance of inverting brightness over colour.)

It would be great if Android’s brightness inversion could be combined with Chrome & Apple’s approach of leaving images out of the changes. This would preserve the look and feel, and meaning, of a design, as well as keeping images accessible.

Performance considerations

There’s a reason why colour inversion has historically been used: the value of each pixel is simply negated (which turns dark pixels light, and light pixels dark, as well as altering the colour). This is a very quick operation for the CPU/GPU to perform, so both the code and the computational effort are kept simple. The problem arises because pixels use the Red-Green-Blue (RGB) colourspace, so simply flipping the value affects both colour and brightness, hence the problems described above.
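As a minimal sketch of why colour inversion is so cheap, assuming 8-bit RGB channels, each channel is simply subtracted from 255. The function name `invert_colour` is purely illustrative and is not taken from any platform's API.

```python
def invert_colour(rgb):
    """Classic colour inversion: negate every 8-bit channel."""
    r, g, b = rgb
    return (255 - r, 255 - g, 255 - b)


# White becomes black, as intended...
print(invert_colour((255, 255, 255)))  # (0, 0, 0)
# ...but a red "error" indicator becomes cyan, changing its meaning.
print(invert_colour((255, 0, 0)))      # (0, 255, 255)
```

There is one subtraction per channel and no colourspace conversion, which is why this has long been the default, despite the hue shift.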

The more apt solution of inverting only the brightness requires looking at the colour values in a different colourspace, such as Hue-Saturation-Lightness (HSL). Doing this allows adjustment of the brightness/lightness whilst preserving the hue (the actual colour). The challenge with this approach is that it is much more computationally expensive, because each pixel’s RGB value must be converted to HSL, be adjusted, and then be converted back to RGB, which would affect performance and battery life. The traditional solution, when important operations are too slow on general-purpose CPUs and GPUs, is to create a specific hardware circuit to solve those problems. This has been done to enable small devices such as phones and tablets to effortlessly decode high-resolution video, and to perform encryption/decryption for transmitting information securely. However, the catch there is that creating custom hardware is extremely expensive, so it is only done when there’s no other alternative.
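The convert, adjust and convert-back steps can be sketched with Python's standard `colorsys` module. This is only an illustration under stated assumptions: 8-bit RGB input, a simple `1 - lightness` flip, and a made-up function name `invert_brightness`. It is not how Android or any other platform actually implements the feature.

```python
import colorsys


def invert_brightness(rgb):
    """Invert lightness only, keeping hue and saturation (8-bit RGB in and out)."""
    r, g, b = (channel / 255 for channel in rgb)
    # Step 1: convert RGB to hue/lightness/saturation (colorsys orders it H, L, S).
    h, l, s = colorsys.rgb_to_hls(r, g, b)
    # Step 2: flip the lightness; hue and saturation are left untouched.
    l = 1.0 - l
    # Step 3: convert back to RGB for display.
    r, g, b = colorsys.hls_to_rgb(h, l, s)
    return tuple(round(channel * 255) for channel in (r, g, b))


print(invert_brightness((255, 255, 255)))  # white background -> (0, 0, 0)
print(invert_brightness((128, 0, 0)))      # dark red -> a lighter red, still red
```

Every pixel goes through two conversions instead of one subtraction per channel, which is where the extra cost comes from.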

It may be that some of the graphics hardware in devices these days can be used to alleviate some of the computational burden of inverting brightness—perhaps the emergence of this feature on Android, and the emergence of smart invert (for colours) on iOS means this is already happening, or simply that the trade-off of battery life in exchange for a dramatically improved experience for light-sensitive users is considered worth it. Android flags this feature as experimental, so hopefully they are collecting some data on its performance to help tune it, and we’ll continue to be able to use it in future releases.

There is another way that this problem could be solved, which may require slightly more design time up-front, but no extra processing: “dark mode”. Several apps already provide a mode of operation for use in low-light environments that basically inverts the brightness of the screen, but this is done by the designers of the app having created that colour scheme for the UI and writing the code to apply it. It’s often rumoured that iOS will eventually contain a global “dark mode” setting, and if so, Apple could provide the APIs and development tools to make it easy for app developers to support this. On recent Android releases, the launcher UI adapts to differing light levels, so it’s possible this too could be extended to apps once the API has matured.
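A designer-supplied dark mode avoids per-pixel work because the app just looks colours up in a palette prepared in advance. The sketch below is hypothetical: the palette names, role names and colour values are invented for illustration, and it does not reflect any real iOS or Android API.

```python
# Hypothetical palettes chosen by a designer; roles and hex values are illustrative only.
PALETTES = {
    "light": {"background": "#FFFFFF", "text": "#1A1A1A", "error": "#B00020"},
    "dark": {"background": "#121212", "text": "#E6E6E6", "error": "#FF6B6B"},
}


def colour_for(role, dark_mode):
    """Look up the colour for a UI role in the active palette (no pixel maths needed)."""
    palette = PALETTES["dark" if dark_mode else "light"]
    return palette[role]


print(colour_for("background", dark_mode=True))
print(colour_for("error", dark_mode=True))
```

Because the designer picks both palettes, the "error" role can stay recognisably red in either mode, which preserves meaning without any runtime conversion.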

In closing

The general, CPU-hungry solution is empowering to users because they can apply it to any app or website, so it is valuable and should be made more widely available on all platforms. However, it would be great if developers were to be given improved APIs by those platforms in order to create alternative UI themes, as this would be considerably more efficient, and would generally raise awareness and consideration of how light levels can affect UIs and users.

These are exciting times for assistive technologies; the future’s bright (or otherwise, as we users see fit).

Comments

Invert brightness is a double-edged sword. It can give a better overall idea of the user interface, but it completely distorts photos and pictographic icons. In black and white, negative photos are unpleasant, but not altogether terrible in the way color negatives are. I think color manipulations should be done at the UI toolkit level (CSS, XAML, etc.), since you can then invert the vital parts of the UI while avoiding inverting the colors of some icons, photos, illustrations, etc.

And while I’m at it, we need meta-colors for bitmaps, so that it’s possible to create icons whose actual colors are determined by UI settings. Meta-colors are entirely possible in SVG/CSS, but vector formats aren’t used for icons very often.

It will take too long, or the chances are too low, for most developers to pay attention to such an (actually really important) aspect of their apps as “dark mode”, so I hope that programs will be created that can invert the brightness of any chosen program’s interface or screen area. This would be one of the “always on” programs on my devices, and surely on many others.